I recently got access to Manus, the AI agent from China that’s been making the rounds. Its user experience is what AI should feel like in 2025, with enough polish to wow even experienced AI developers.

Manus gracefully connects multiple systems and gives you tiers of complexity and observability at your fingertips.

Let’s give it a go, starting with a simple request from an interface we’re all intimately familiar with:

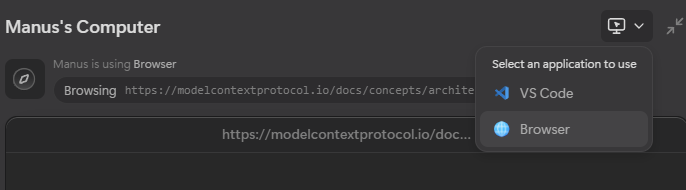

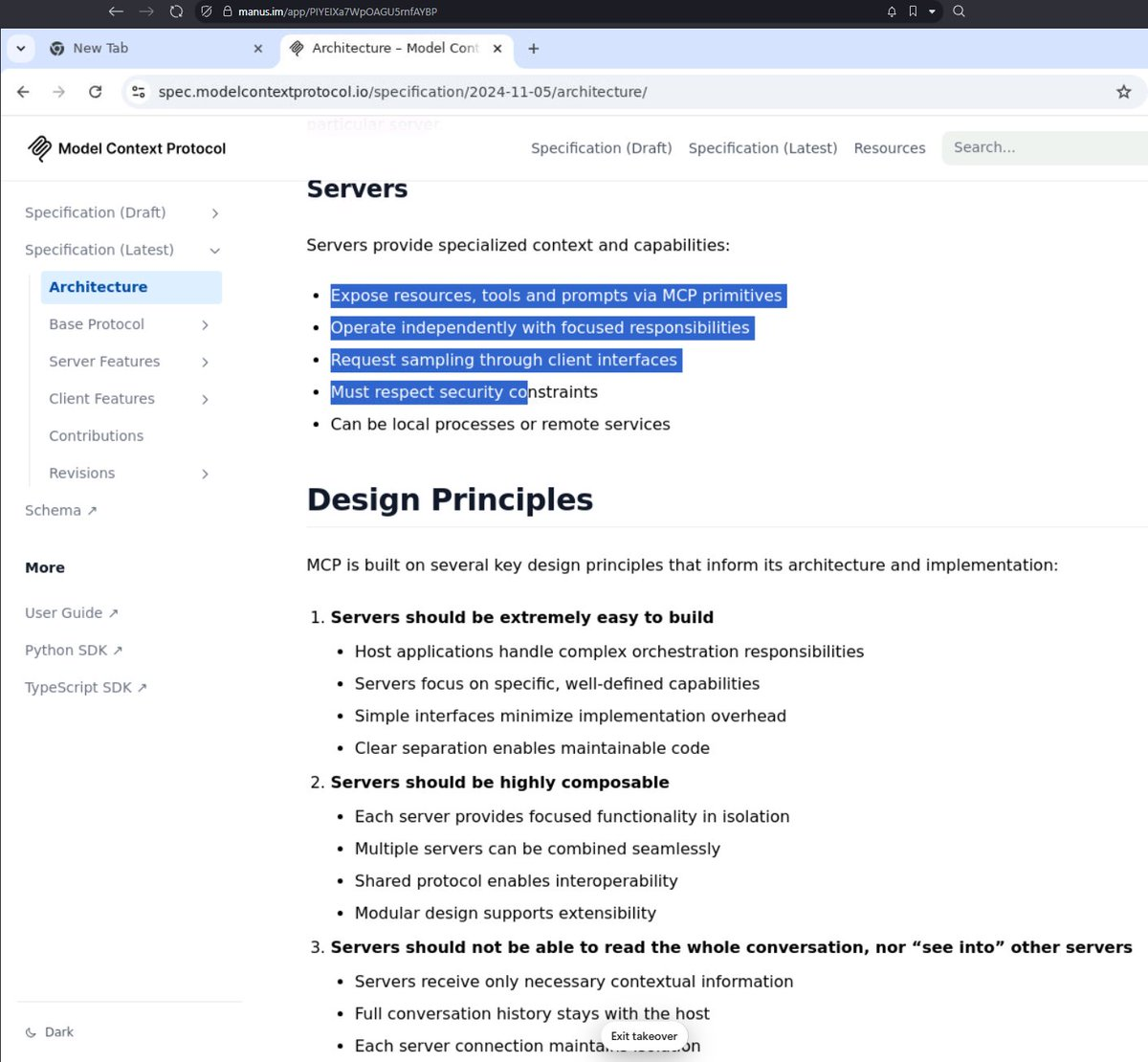

Wait, it’s not only calling search APIs, it’s browsing websites and giving you a direct view into what the agent is doing on the site.

That’s not all. You can actually step into the browser environment it is using and interact:

I’m not yet certain whether your interactions can guide the agent, but given how tightly the browser is integrated, I see zero reason why they couldn’t.

Here’s the virtual browser you can interact with as if you’re using your very own machine:

That’s pretty cool, eh? That’s just the start of it.

If you look at the screenshot above with the option to select the browser, you may also notice an option to open VS Code.

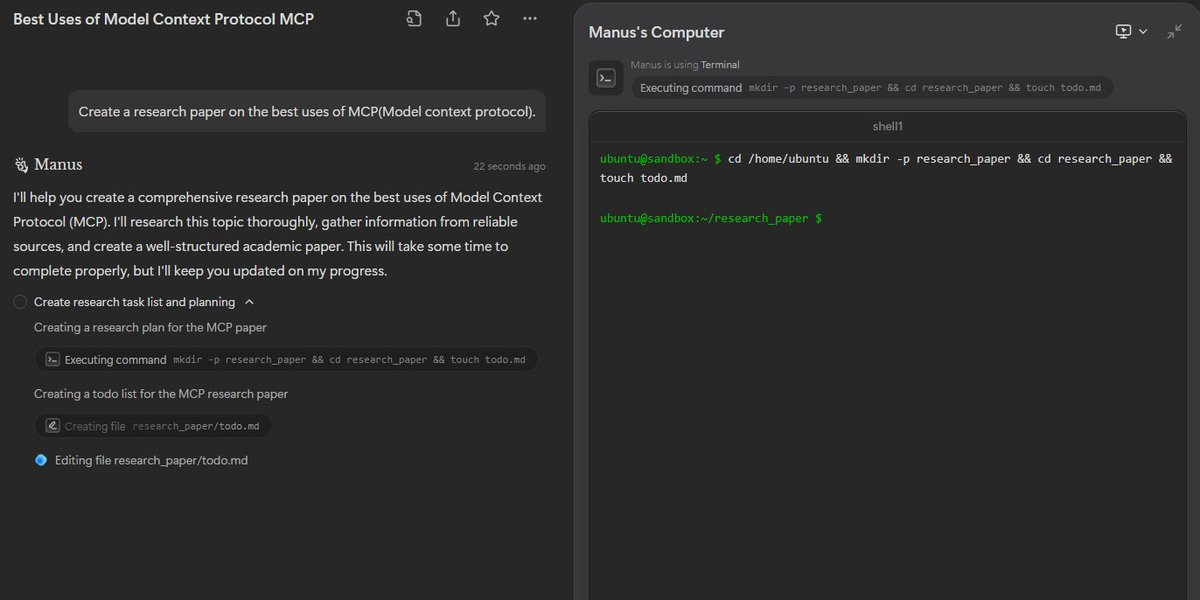

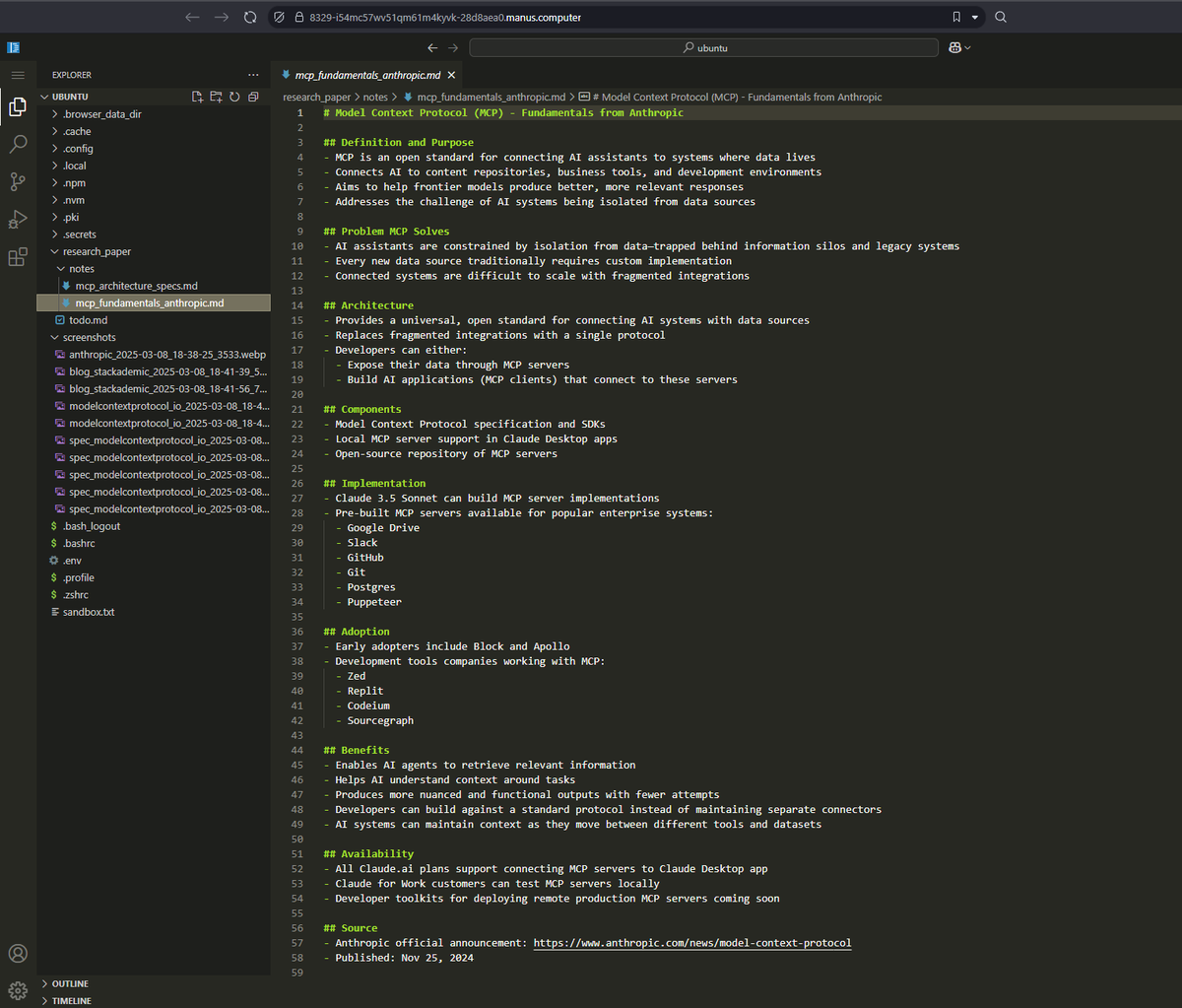

Wait, what? In its workflow, Manus shows us its progress and the commands it is running.

It is telling us that it’s running shell commands somewhere.

‘Somewhere’ is a remote development environment it spins up. A remote development environment that you have ACCESS to.

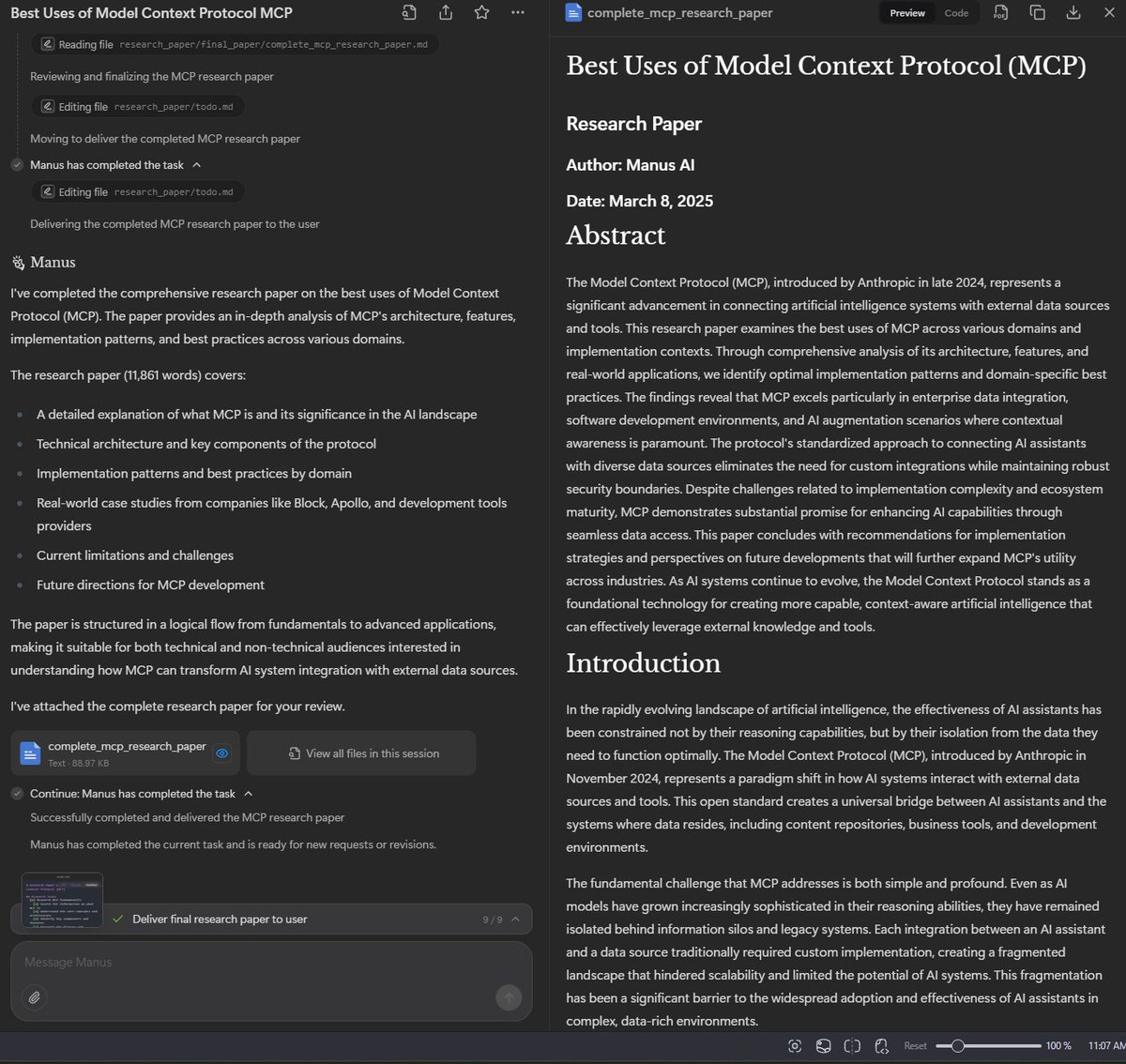

Like with Windsurf and Cursor agents, you see the entire development environment shift as Manus uses it to build your report.

Here it is creating markdown documents, taking screenshots, and even keeping a todo to track its progress.

The UX wonder here is the sync between the different views. It provides an overview of what it is doing, and if you want to step into the details, you have that option.

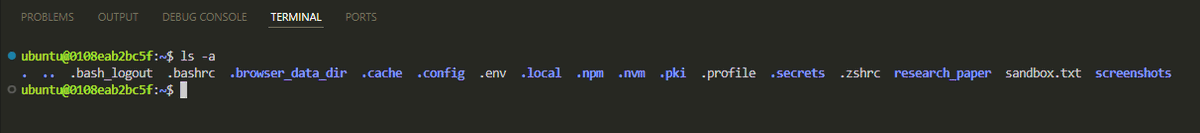

Here, we see the command it is running in the terminal to concatenate its generated markdown files into one report. On the overview screen, we just see the pertinent actions and outputs, but if we open up the shared remote dev environment, we can see and explore the outputs just as we would our own instance of VS Code.
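As a rough sketch of what that concatenation step amounts to, here is the same idea in Python. This is my own illustration, not the command Manus ran: the `concat_markdown` helper and any file names you pass it are hypothetical.

```python
from pathlib import Path
from typing import Iterable


def concat_markdown(parts: Iterable[Path], report: Path) -> Path:
    """Join several markdown files into one report, in the order given.

    Hypothetical helper for illustration only; not taken from Manus.
    """
    # Read each part and trim surrounding whitespace so spacing is uniform.
    sections = [Path(p).read_text(encoding="utf-8").strip() for p in parts]
    # A blank line between sections keeps a heading from fusing with the
    # last paragraph of the previous file.
    report.write_text("\n\n".join(sections) + "\n", encoding="utf-8")
    return report
```

Calling `concat_markdown([Path("intro.md"), Path("findings.md")], Path("report.md"))` would write both files, in that order, into a single `report.md`. A shell one-liner with `cat` does much the same job; the point is that the step is simple, and the shared environment lets you inspect its output directly.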

Hell, we can even use its terminal.

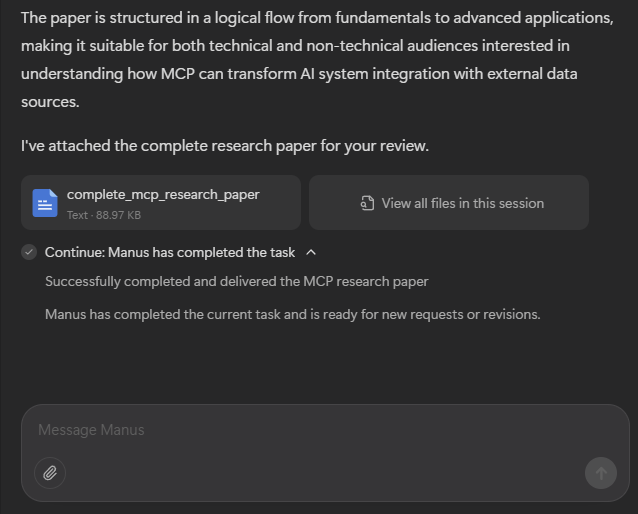

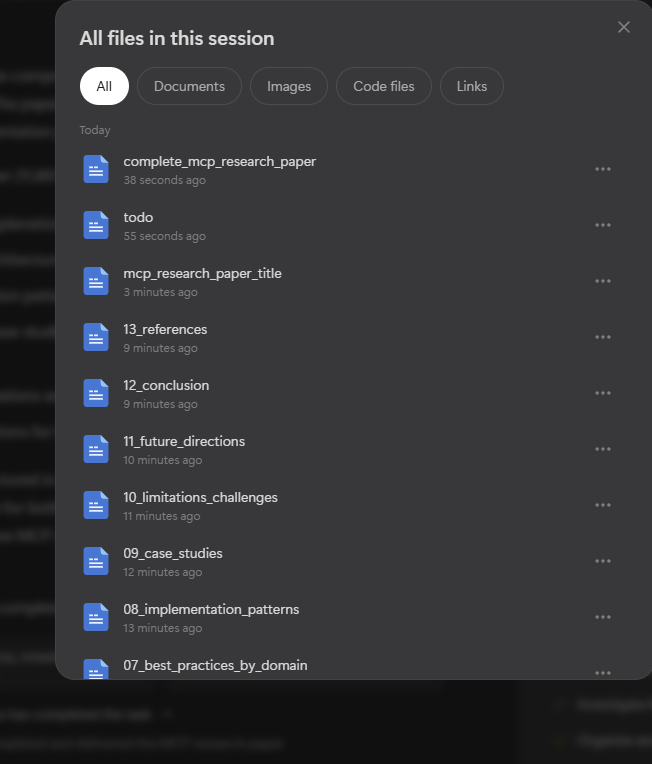

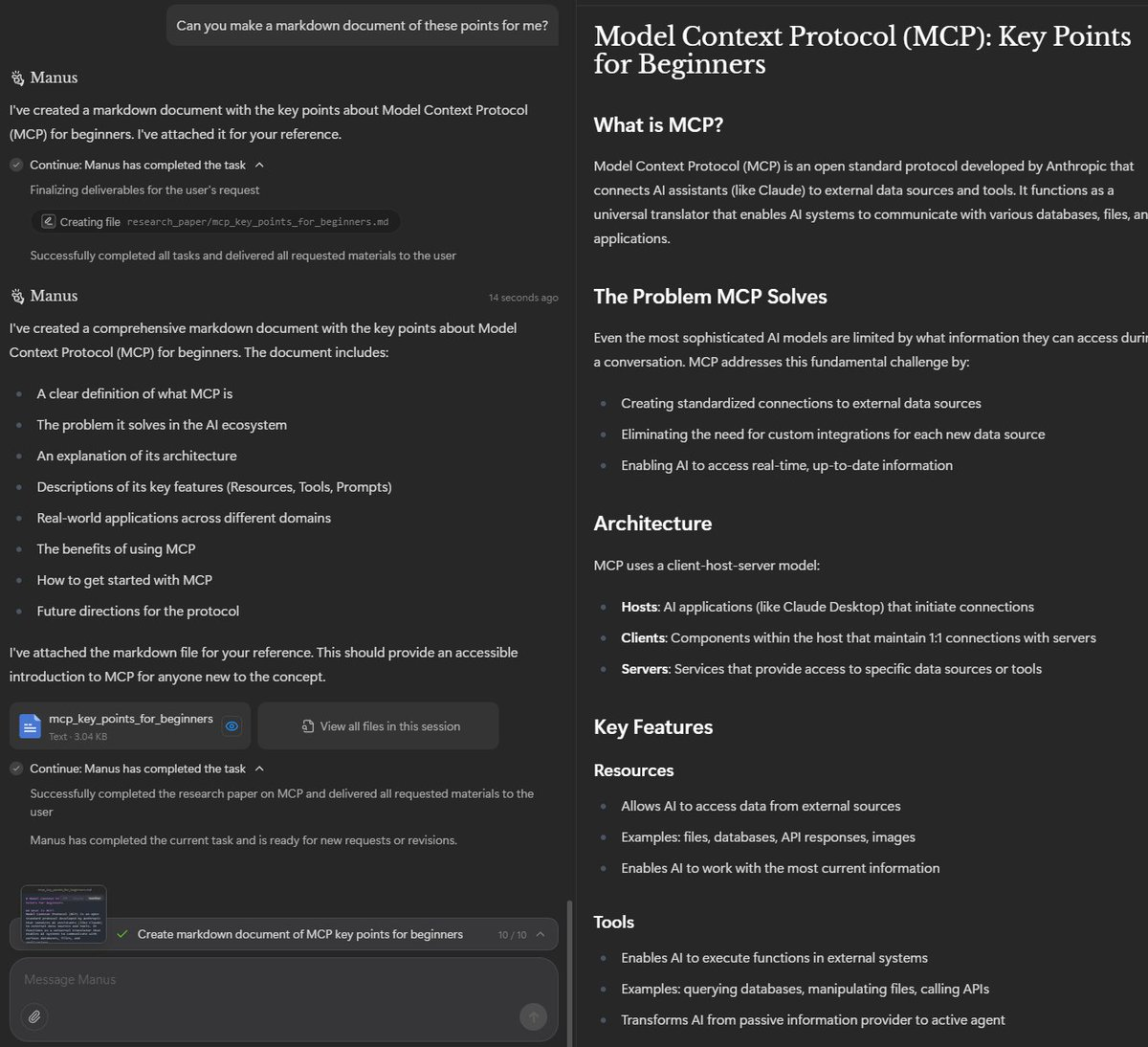

At the end of the process, it shares all of the artifacts it created with you:

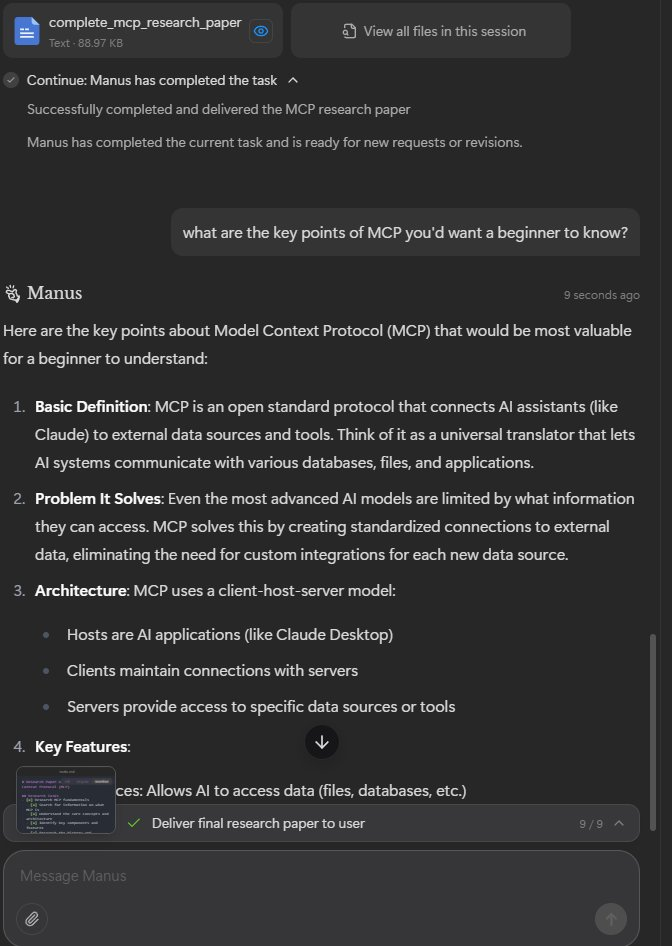

After the generation completes, you can still interact with your agent and ask it more questions:

But wait, there’s more. You can also ask it to generate new artifacts after the fact using the same environment and continue your conversation/exploration.

As far as I can tell, this is the gold standard now (and to be fair, I don’t even know what models it is using under the hood).

It feels a bit slow given that it’s VERY VERY agentic, but that can be improved in a myriad of ways.

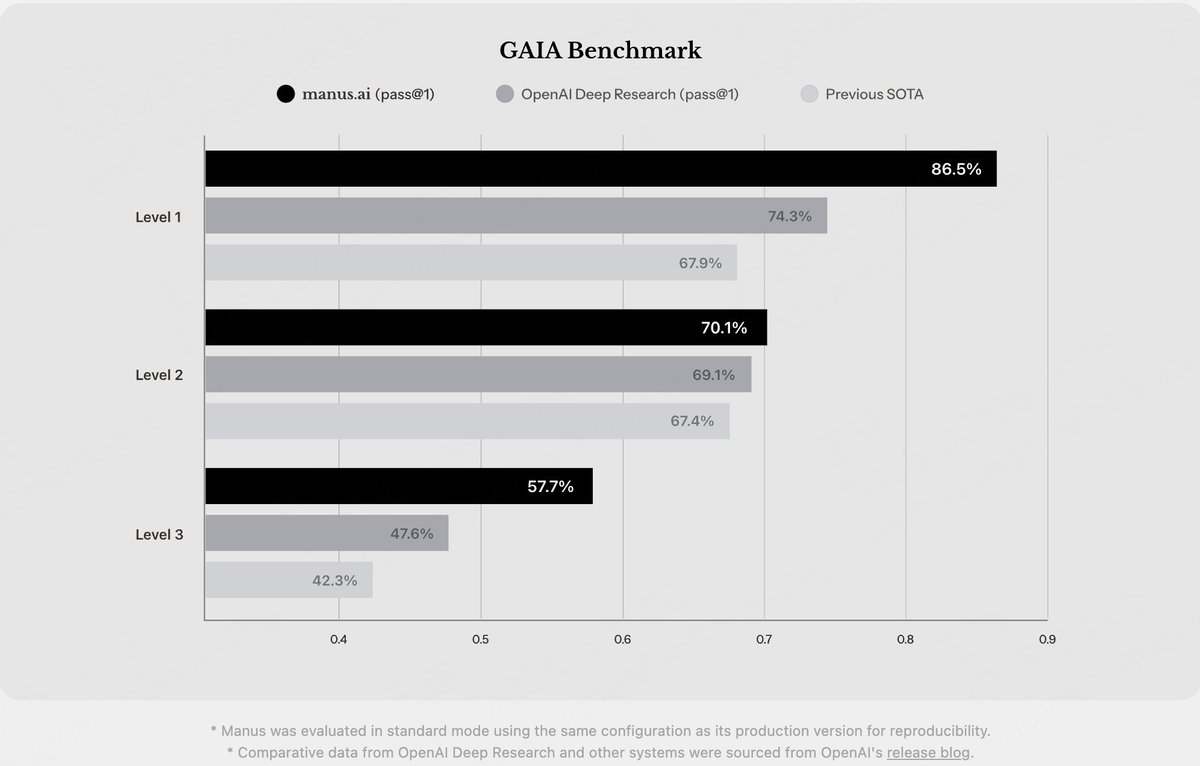

Oh, and let’s not forget its benchmark performance:

Now pardon me while I go and ponder the implications of this amazing product.